For the entire year, I counted down the days until I would get to go into New York City every day for seven weeks and participate in the Girls Who Code Summer Immersion Program. When I first learned about the program, it sounded like a dream: I would learn how to code in Python, learn about web development, learn the basics of data science, and gain exposure to robotics, all things I had been wanting to learn for a long time. In addition, I would do it while making friends with people with similar interests to mine, and spend the summer in NYC with them. But the day was quickly approaching, and at a certain point, I knew that it was going to be made virtual (if not cancelled). I tried to imagine what it would be like. Would I get to work with my assigned company that usually mentors the program members or have as many workshops as before? Would I gain hands-on experience? Would I still get to make friends? The truth was, when I heard the program had been shortened to two weeks, with just an hour-and-a-half class each day, I wasn’t too optimistic. I saw that I would only learn web development using HTML/CSS/JavaScript, and I thought it was going to be a waste of time and that I should have waited a year to apply.

Luckily, Girls Who Code proved me wrong. After completing the program, I feel like I’ve learned not only how to use the languages we were taught, but how to think. The first week we learned the basics of HTML/CSS/JavaScript, and the second week we created our own activism websites. I loved the resources that Girls Who Code used to teach some of the more difficult concepts. For example, I clearly remember playing a layout game to review Flexbox for CSS, which helped me understand its capabilities and how to group different items so I could display them the way I wanted on my website. A specific coding trick that one of my teachers taught me was using “inspect element” to troubleshoot.
I had used inspect element before when trying cybersecurity, but I realized that there were a lot of other uses for it when building a website. I could highlight parts of the box model to check for problems and see how to fix them. In terms of working with our sponsor company, our company, Moody’s, had two workshops prepared for us. One was a resume workshop where we were taught how to structure a perfect resume and how to explain your skills and experience in a way that shows exactly what you are good at and what you have learned. The second workshop was an AI workshop, which I thought was presented very well. It covered the basics of AI, and used the Amazon Alexa to explain applications. Because the example was something that most people were familiar with, we were able to truly understand how AI is used. We had a brainstorming session to come up with ideas and got to see some of the product’s code in action. Overall, I enjoyed the workshops and think they were executed perfectly. Finally, I want to touch on how well Girls Who Code connected the students and encouraged us to work together. Every morning, we had “Sisterhood Activities” to bond with each other, my favorite being one where we played the first few seconds of a song and had to guess what it was. However, the best activities were the small group feedback sessions where we were able to look at each other’s projects, give advice, and get to know each other. Through these short meetings, I was able to meet so many nice people, who helped me throughout the program and that I know I will stay in touch with in the future. I definitely recommend sticking with things, even if plans are changed. It was still something I looked forward to every morning and definitely would have regretted not participating in this year. I am so thankful for the skills I gained and the friendships I made.


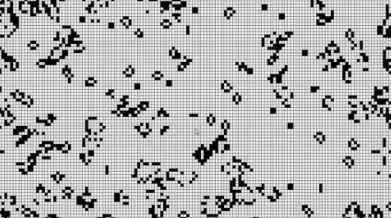

Whenever I visit my grandfather, he has a puzzle sitting on his small desk in the corner of his apartment, often with a finished border and nothing more. I watch him work as certain sections start to build up, one puzzle piece turning into two, and then into ten, and then into fifty, until areas come together, and eventually the puzzle is finished. I would complete the same set of rules over and over again: scanning the board, finding a spot with a missing piece, looking at the disconnected pieces that could fit, testing them to see if they were correct. I loved the idea that this simple set of steps resulted in something that seemed so complex and worthwhile. I tend to think of my grandfather’s puzzles when I talk about Cellular Automata, and maybe after I explain it a bit, you will too. Cellular Automata consists of a grid of filled-in or empty boxes which are programmed to evolve based on how the boxes are placed. They have a certain set of rules that fill in new boxes or clear old boxes to create different complex patterns. For example, the program may analyze a set of four boxes in a “T” shape, shown below. If certain boxes are filled in, it will activate the program, which contains rules of what should happen next. Let’s say the program says that if no two consecutive boxes form a horizontal line, the boxes surrounding it will then fill in, but if there are at least two consecutive boxes forming a horizontal line, the program will not run and instead the entire section will disappear. Still confused? Probably. Here’s another visual to help. You can now see a few possible combinations and their outcomes. The 1s on the bottoms indicate that the conditions are met, while the 0s indicate that they are not. The program with filling in outer boxes will run if the program yields a 1, but will remove all of the boxes if it yields a 0. It’s that simple. Usually, you will see something more like this. 
The program is simultaneously analyzing every box set and carrying out the rules based on which boxes are filled in throughout the grid.

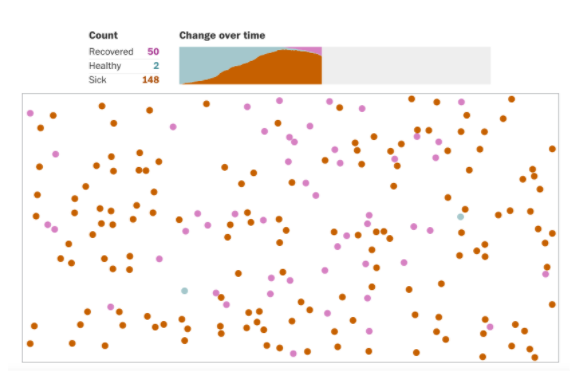

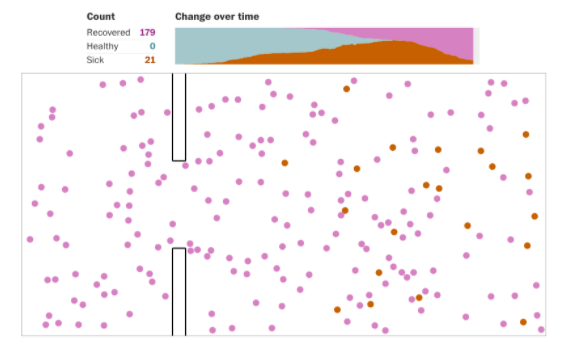

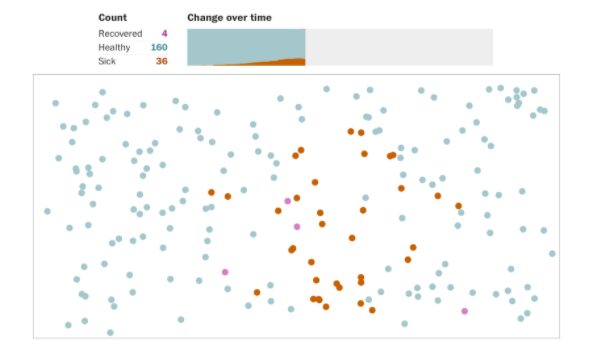

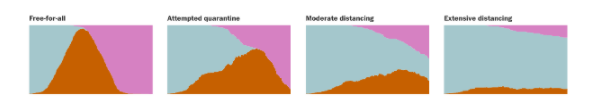
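The idea of checking every box at once and then applying the rules can be sketched in a few lines of Python. This is a minimal sketch under my own assumptions, not the code behind any particular website: it uses the standard rules of John Conway's Game of Life (a filled-in box survives with two or three filled-in neighbors, and an empty box fills in with exactly three), it stores the filled-in boxes as a set of (row, column) pairs, and the function name `step` is made up for this example.

```python
from collections import Counter

def step(live):
    """Return the next generation from a set of filled-in (row, col) boxes."""
    # Count, for every box on the grid, how many filled-in neighbors it has.
    neighbor_counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Every box is judged against the OLD grid, so the update is simultaneous.
    return {
        box
        for box, n in neighbor_counts.items()
        if n == 3 or (n == 2 and box in live)
    }

# Three boxes in a horizontal row (a shape often called a "blinker").
blinker = {(1, 0), (1, 1), (1, 2)}
after_one = step(blinker)
after_two = step(after_one)
print(sorted(after_one))
print(after_two == blinker)
```

Running `step` once turns the horizontal row into a vertical one, and running it again flips it back, which is one small example of a starting shape that "stays alive" forever.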

John Conway’s Game of Life is a perfect place to see this in action. You are able to choose which boxes to start with and run the program to watch your creation evolve. Below the program is the list of rules that it follows, and you are able to adjust the scale of the grid and the speed of the program. I encourage you to play around with it and challenge yourself to find starting shapes that “stay alive” for the longest. Here is the link: https://bitstorm.org/gameoflife/

The uses of Cellular Automata are endless. One example is random number generation for cryptography, where the changing configurations can serve as the random sequences. However, they are most often used to model biological systems and natural occurrences, from the formation of snowflakes to the behavior of gases. They can also represent the formation of artificial life and be used to understand how self-reproduction emerges from the right conditions. Self-reproduction is modeled by the creation of certain components within the program due to the conditions, and I believe that it will eventually evolve into modeling how simple organisms are created from practically nothing. There is a lot to learn from the concept of Cellular Automata and I have no doubt that the evolution of the idea will be extraordinary.

Sources:
https://mathworld.wolfram.com/ElementaryCellularAutomaton.html
http://www.mjyonline.com/CellularAutomataUses.htm#:~:text=Cellular%20automata%20can%20be%20used%20directly%20to%20create%20visual%20or,model%20physical%20and%20biological%20systems.
https://bitstorm.org/gameoflife/

“Today, even school children are taught that the machine is a tool for creating new worlds, not simply something for probing and/or modifying the existing one.” While I was reading John L. Casti’s Would-Be Worlds, this line stood out. It spoke to me directly, challenging an idea that I previously believed was common knowledge. I live in a world full of computer fanatics.
Both my mom and my dad were computer science majors, and my aunt is an accountant. I go to an engineering-focused high school, where half of my grade majors in electrical and computer engineering, and the other half uses computer software for CAD modeling. The people around me process and create things using machines. In my eyes, and I assume many of yours, creation is the primary purpose of a machine. However, computers do more than simply process data to provide results. They can be used to recreate real-world problems in a controlled environment to help us make decisions about future events. They are able to render simulations. The first ideas that come to mind when discussing simulation are video games. These are advanced computer programs that allow users to make decisions following a set of rules that affect what will happen next. This same concept is used in scientific simulations to predict the future. Let’s take a look at a simple example. Biomorphs are an early simulation idea described by Richard Dawkins in his book, The Blind Watchmaker. These computer-generated structures, inspired by real animals, are surrounded by slightly mutated shapes. The user has the option of choosing one of the surrounding structures to change it, and the process is repeated as they continue to choose the mutated shapes. In other words, the person is controlling how the structure evolves, including its width and length, shape, and details. The concept revolves around the idea of the evolution of organisms. Here is an example of what I mean: In this simulation by Emergent Mind, the central structure is the original “animal”, and the surrounding structures are the slightly mutated animals. Being able to analyze these computer-generated models is useful if humans know which natural factors result in each of the specific mutations in the real world. It allows scientists to understand what different organisms evolved from and what they may evolve into in the future. 
In comparison to its more modern counterparts, this biomorph simulation is fairly simple. It was described in a book published in 1986, when technology was not nearly as advanced as it is today. Simulation has evolved over time to tackle a variety of problems that more directly impact our everyday lives. For example, simulations are currently being used to predict the spread of COVID-19. Only using available data to understand past trends is not enough; it’s important to use simulations in order to predict the spread of the disease. The Washington Post is using mathematical data to simulate how the virus would spread over time. They modeled the small town of Whittier, Alaska using a set of rules to figure out how quickly people recovered and how many people were healthy and sick. Here is a snapshot of the simulation:

A forced quarantine was implemented in the simulation to show the impact of isolation. Here is what they came up with:

What about social distancing? This is more difficult to show, because the simulation shows most of the dots staying still, while some are in motion. When a dot that has the disease comes into contact with one that does not, it transmits the disease, and the spread grows exponentially. The model began to look like this:

And finally, a model that shows the comparison of my results after playing each simulation is rendered at the end:

It is important to remember that the results generated from these simulations are random. After the simulation is completed, I can replay it, but I will get slightly different results. With repeated testing, however, it becomes clear that the same basic trends emerge, provided the conditions are the same, and the common results can be interpreted as probable future outcomes.
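The point about randomness and repeated testing can be illustrated with a toy model. This is a minimal sketch, not The Washington Post's simulation: the population size, number of daily contacts, infection chance, and recovery time are all made-up numbers, and `simulate` is a hypothetical helper written just for this example.

```python
import random

def simulate(seed, people=200, contacts=4, infect_chance=0.08,
             sick_days=10, days=60):
    """Run one random outbreak and return how many people were ever infected."""
    rng = random.Random(seed)  # a different seed gives a different replay
    days_left = [0] * people       # days_left[i] > 0 means person i is sick
    ever_sick = [False] * people   # True once person i has caught the disease
    days_left[0], ever_sick[0] = sick_days, True  # one starting case
    for _ in range(days):
        sick_today = [i for i in range(people) if days_left[i] > 0]
        for i in sick_today:
            # Each sick person bumps into a few random people per day.
            for _ in range(contacts):
                other = rng.randrange(people)
                if not ever_sick[other] and rng.random() < infect_chance:
                    days_left[other], ever_sick[other] = sick_days, True
            days_left[i] -= 1  # one day closer to recovery
    return sum(ever_sick)

# Replay each scenario 20 times (assumed: distancing means fewer contacts).
no_distancing = [simulate(seed, contacts=4) for seed in range(20)]
distancing = [simulate(seed, contacts=1) for seed in range(20)]
print(sorted(no_distancing))
print(sorted(distancing))
```

Individual replays differ from one another, but comparing the two sorted lists shows the overall trend: with the same conditions, the runs with fewer contacts tend to end with far fewer people infected.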

If we simply made calculations from data, it would result in a single number or a range prediction, which, depending on the complexity of the situation, can often be inaccurate. Simulation allows us to take conditions, rather than results, and see how things play out in those conditions. Having always been one who needs to have a full understanding of a topic for it not to bother me, biology was never my thing. It felt like pure memorization to me, because I never really did comprehend what was happening and why. My dad handed me this book called “Would Be Worlds”, with the caption on the front, “How Simulation is Changing the Frontiers of Science”. I thought, this seems interesting, simulation uses patterns to create models that mimic different concepts. However, I became less thrilled when biology was mentioned in the second chapter. Richard Dawkins of Oxford University… genes… natural selection… I’ve heard it all before. Living systems… mutation… biomorphs... What the heck is a biomorph?! As I mentioned before, I hate not knowing things, not understanding things. I read the page about biomorphs, and I read it again. “In Biomorphland, one begins with a kind of stick figure. This skeletal object is then mutated in accordance with a set of rules, creating a spectrum of offspring that are each determined by a single genetic mutation” (Casti 38). I stared at the diagram on the next page. I understood that it was a simulation of natural selection. That was about it. I looked up the term and found plenty of extremely technical articles on how biomorphism worked. I still didn’t quite understand the concept. That was until I came across a website simulation. It started with a random, skeletal shape, and it had slightly mutated shapes around it. You could choose which shape you wanted to start with next, and the process would continue to repeat as you chose slightly mutated shapes. Let me show you what I mean. 
Here is an example simulation, which I found on http://www.emergentmind.com/biomorphs. The middle skeleton is the starting shape, and you can click on any of the outside shapes to start with for the next round. Each of the surrounding shapes has a small mutation, and you are the person controlling which mutations “survive”. I played around with this for a very long time. It was fun trying to make the frog-like skeleton evolve into something specific. For example, I tried to make changes so it would become as close to a straight, vertical line as possible. Here’s what I came up with. I think I succeeded.
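The choose-a-mutant loop can also be sketched in code. This is only an illustration under my own assumptions, not how the Emergent Mind page or Dawkins's original program works: a "shape" here is just a list of whole-number genes, each offspring differs from its parent by one gene moved one step (following the "single genetic mutation" description quoted earlier), and the computer stands in for the person clicking by always picking the offspring closest to a target. The names `offspring`, `distance`, and `evolve`, and the sample numbers, are all hypothetical.

```python
def offspring(parent):
    """Return every child that differs from the parent by one gene, up or down one step."""
    children = []
    for i in range(len(parent)):
        for change in (-1, 1):
            child = list(parent)
            child[i] += change
            children.append(child)
    return children

def distance(shape, target):
    """Total gene-by-gene difference between a shape and the target."""
    return sum(abs(a - b) for a, b in zip(shape, target))

def evolve(start, target, rounds):
    """Each round, keep the child closest to the target; return every pick in order."""
    current = list(start)
    history = [current]
    for _ in range(rounds):
        if current == target:
            break  # nothing left to improve
        current = min(offspring(current), key=lambda child: distance(child, target))
        history.append(current)
    return history

start = [3, -2, 4, 1]    # made-up genes for the starting skeleton
target = [0, 0, 0, 5]    # made-up genes for the shape I am aiming for
history = evolve(start, target, rounds=20)
print(len(history) - 1, "rounds of selection")
print(history[-1])
```

Because every round changes only one gene by one step, reaching the target takes as many rounds as the total difference between the start and the target, which matches how slowly the skeleton changes when clicking through the real simulation.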

But after challenging myself to create skeletons that I pictured in my head, I wondered what the practical application of this simulation was. These simple shapes do not even come close to mimicking organisms on Earth today. As Would-Be Worlds put it, “Biomorphs do not actually do anything.” So what can we learn from studying biomorphs? The simplest answer to this question is the evolution of the shapes of organisms. If we can make predictions of what natural factors result in specific mutations, we can understand what different organisms evolved from and what they may evolve into. Beyond that, the fact that we are able to create this type of evolution on a computer screen provides us with hope for the future of simulation. Already, more advanced concepts are being formulated, and we are starting to utilize technology more to predict or explain something about real-world problems. Let me know if you think of other ways that simulation is being used as a model to predict the future. I’d love to hear your thoughts.

My fridge is full, yet I’m still overwhelmed. I’m overwhelmed because it isn’t filled with what my family typically buys. I’m overwhelmed because there are things that we have to conserve which we wouldn’t have been careful about before. Amidst the global pandemic, we are forced to change our ways of living, because we don’t have access to all of the resources we used to rely on. But we are fine. We are able to repurpose what we do have access to and use it efficiently. However, there are doctors who do not have access to the medical tools that are necessary to keep themselves safe from their infected patients. They are constantly exposed to the virus and need more than cloth masks to protect themselves. The problem is that there is a lack of medical equipment made specifically for a global pandemic like COVID-19.
As the disease becomes more widespread, fewer doctors have access to helmets and masks to protect themselves and to contain the disease. I think that it is interesting that colleges and universities possess a tremendous amount of technology and have access to materials that could benefit healthcare workers. Instead of starting from scratch, they are able to repurpose the technology they already have to develop helpful innovations. For example, Duke University turned a surgical helmet into a powered air purifying respirator, which is essentially an air filtering mask. The Duke engineers who contributed to the project had a goal of combatting the lack of medical equipment. Surgical N95 respirator face masks are personal protective equipment (PPE) usually used by healthcare workers to protect themselves, but many hospitals are experiencing shortages of this item. Orthopedic spine surgeon, Melissa Erickson, one of the project contributors, decided that if there were national shortages on PPE, it would make sense to make modifications to equipment that is already used in the hospital. Attaching a filter to a helmet usually worn during arthroplasty surgery integrated technology that was already available and new components made of accessible materials. Their PAPR (filtered personal protective equipment) was an alternative to PPEs that provided equivalent or greater protection to health care workers. The filter that is added to the surgical helmet is 3D printed using Duke Innovation Co-Lab’s Formlabs printers. It was remodeled and tested four to five times before being completed. After it was finished, it was tested by the HEPA certification company, Precision Air Technology, before care providers began using it. I reached out to Professor Ken Gall for further inquiry about the engineers’ future plans for the newly developed technology. 
When I asked whether the masks are being delivered to hospitals outside of Duke, he responded: “Right now they are being delivered only to Duke, but we are sharing designs with other hospitals so they may possibly make some for their own facility.” He also gave insight into how the PAPR compares to the N95 mask commonly used. “The filters are similar to what is used on N95, but not exactly the same configurations. The PAPR suit hack (the design transforms a Arthroplasty suit to a PAPR) covers much more of the health care worker than a N95 mask. PAPR suits, both the original ones and our transformed Arthroplasty suit, offer much more protection than an N95 for riskier procedures like intubations. But there are not enough of them to replace N95 masks.” In addition to Duke, the University of Michigan created a repurposed industrial respirator to help in the hospital when testing and treating Coronavirus patients. While Duke’s masks are made for workers, UMich’s masks are intended for patients to use. The helmets serve the purpose of protecting healthcare workers and safeguarding the hospital systems, allowing therapies to be delivered outside negative pressure rooms. Negative pressure rooms are important because air flows into them but not out of them, which keeps contaminated air segregated from the rest of the hospital. However, limited access to negative pressure rooms called for technological changes to be made. Inspired by an astronaut helmet connected to a hose, the UMich researchers developed a new, portable, mass-producible helmet system that creates negative pressure inside of it, which protects caregivers and saves ventilators for critical cases. The helmets allow treatments to be delivered outside negative pressure rooms and still isolate patients from healthcare workers, in order to prevent the spread of the virus. The helmet was built mostly from commercially available parts and was tested by clinicians for its functionality and for the ability to speak with it on.
The UMich researchers were able to receive feedback from the clinicians before testing the helmets with patients under a supervised protocol. Now, they have developed successful helmets and their goal is to spread them among healthcare workers as fast as possible. They are working with industry partners to increase production and reach their goals during the pandemic. Learning about all of this makes me think about how I could be helping during the pandemic. Universities have access to so much more technology and advanced resources than we do in our households, but that doesn’t detract from the fact that we can at least appreciate what we do have. The idea of repurposing is something we can consider during a time when we can’t leave our house and stop at the store casually. Most importantly, we can appreciate the help and the sacrifices universities, companies, and individual people are making to bring the world back to normal.

Last fall, I interned as a data analyst at a company called Ometry with a few goals. First, I was interested in their mission to interpret and create models based on car crash data, in order to predict where crashes are most likely to occur. To put it simply, I wanted to be involved in helping save lives. In addition, I wanted to gain experience working with data because I feel that it is such a useful skill in discovering solutions to everyday problems. I had completed research on Smart Cities the previous summer and found it interesting how the technology they used collected data and functioned in a certain way based on it. Helping Ometry would give me the knowledge to further investigate the goals of smart technology and discover other ways data could be used to improve the world.

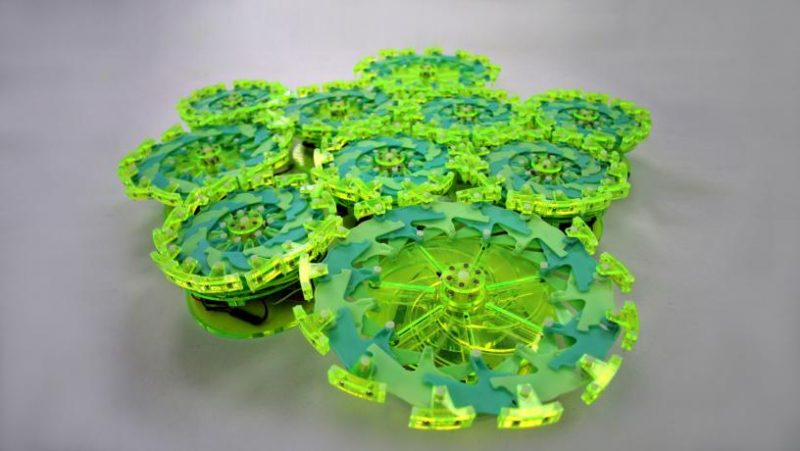

As the only high school intern, with limited professional experience, I found working with other interns who attended top colleges, such as Columbia University and Georgetown University, intimidating. The data analysts had group calls with the founder and CEO of the company every Tuesday and Friday, in addition to a full team meeting (which included the Data Science team) every Monday. During each of the calls, we would discuss our progress and the strategies we used to find data. Little did we know at the time, there would be struggles that we would have to overcome. The job of a data analyst at Ometry was to find Car Crash data, AADT (Annual Average Daily Traffic) data, Roadway Network data, and Roadway Characteristic data for each state they were assigned. However, finding data was not as easy as it seemed because of the specific characteristics each data set had to possess. Car crash data had to include XY coordinates for each individual crash. Roadway Characteristics had to include specific traits of each individual road, such as width, road type, incline, etc. All of the data needed to cover all roads in the state, rather than just major roads or highways. Finding data that met these requirements required us to look through each data set, interpret the variables we were looking at, and decide whether it met some or all of our needs. We then created ReadMes, or summaries with links to where we stored our data, and recorded important aspects about what we found. A lot of the time, we would upload the data to an online mapping program called ArcGIS and use it to visualize where the roads were and where the crashes happened along those roads. Then, we would screenshot the map and attach it to the ReadMe. The two types of files we worked with were CSVs and Shapefiles. CSVs were formatted like spreadsheets and were typically easy to analyze. We would record the variables given and write short descriptions of their meanings as needed.
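A requirement like "every crash must have XY coordinates" is the kind of thing that can be checked with a short script. This is a hedged sketch, not Ometry's actual tooling: the column names `X` and `Y`, the sample rows, and the helper `summarize_crash_csv` are all invented for illustration, and a real state data set would use its own column names.

```python
import csv
import io

def summarize_crash_csv(file_obj, x_col="X", y_col="Y"):
    """Return the column names and the file row numbers missing a usable coordinate."""
    reader = csv.DictReader(file_obj)
    bad_rows = []
    for row_number, row in enumerate(reader, start=2):  # row 1 is the header
        try:
            float(row[x_col])
            float(row[y_col])
        except (KeyError, TypeError, ValueError):
            # Missing column, short row, or a blank / non-numeric value.
            bad_rows.append(row_number)
    return reader.fieldnames, bad_rows

# A tiny made-up file standing in for a downloaded crash CSV.
sample = io.StringIO(
    "crash_id,X,Y,severity\n"
    "1,-74.17,40.73,minor\n"
    "2,,40.22,serious\n"
    "3,-74.76,40.21,minor\n"
)
columns, missing = summarize_crash_csv(sample)
print(columns)
print(missing)
```

The list of column names is what would go into a ReadMe as the variables given, and the list of row numbers shows which crashes could not be placed on a map.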
Shapefiles were more difficult to analyze because they were zipped files containing separate files of different information. Typically, we would open a specific PRJ file (Shapefiles contain many different smaller files, one of which is the PRJ file), and record the geographic coordinate system and projected coordinate system, important information describing the orientation when looking at the data plotted on a digital map. I’m sure this sounds a bit confusing to anyone who is reading it that has never worked with these types of files before, as it did to me when I first started the job. However, it eventually became easier as we reviewed our ReadMes as a team and discussed our progress with each state. Asking questions was especially important in understanding how to go about each task and allowed me to better understand what I was doing, rather than just opening random files and recording information. One of the most significant things I learned from the job was the importance of asking questions. Although at first I was afraid that it would make me look less knowledgeable, I later realized that everyone can learn from the questions I ask and most likely, many people are wondering and struggling with the same tasks at the beginning of a job. The point of taking any internship, or really anything you do in life, is to learn. Asking questions shows that you are paying attention to details and that you actually know what you are talking about, rather than that you are completely clueless. I started with the state of New Jersey, which I worked on continuously over the entire Fall Semester, which overlapped with other states that I finished much quicker. Finding all of the required data for a state could take days to months, depending on how much data the state makes publicly available. If the files could not be found on general websites, such as the Department of Transportation website for that state, we had to look for other sources that could be storing the data. 
This often included public universities and other people who had received the data in the past for projects that they were working on. Finding the data required many emails to be sent and many calls to be made, many of which were never answered. My first email to the New Jersey Department of Transportation, requesting car crash data, was immediately returned with a message informing me that the head of the department would be unavailable from September to January. New Jersey was a struggle mainly because of the car crash data. While AADT data and roadway network and characteristics were fairly easy to find, the NJDOT page that held the car crash data seemed to be broken. It had a table with a dropdown menu where car crash data could be selected, but after I chose any option, the page would just reload and not download or display the data. After never receiving responses to my emails, I decided to give them a call. I was told that the person I should talk to was out on a lunch break, and when I called again an hour later, I was told that they had gone home for the day and would call me back in a few days. I never got a call back (surprising, I know). I even found a company that had used the data in the past and asked them where I could get it, and they just redirected me back to the same page. It seemed as though this was the only place the data was kept, and it was extremely frustrating to know it existed but was impossible to retrieve. I held off on New Jersey because of this, and continued on with the states of Connecticut, Colorado, and Georgia. Although it took many contacts, I was able to retrieve all of the data for Connecticut and Colorado, and most of the data for Georgia by the end of the semester. I came across the problem of finding roadway characteristics that actually described the physical characteristics of the road, rather than just the exact position and road length.
It was also difficult finding data that included smaller roads, in addition to the major roads and highways. Uploading the data to ArcGIS was helpful when identifying if all of the roads were covered when the data did not explicitly label which roads were highways and which were local roads. All of the data for each state needed to be “model ready”, which meant that it could be processed by the data scientists, and though it was somewhat stressful, it was understood that some states took longer than others. However, New Jersey continued to bug me. I wondered how it was possible that the other company was able to retrieve the data in the past, and why the page reloaded every time I chose an input from the dropdown menu. It seemed as though it should be so obvious, yet it wasn’t. I explained the problem to my boss and the other interns, who attempted to look at the page and solve it, but none of them could. That was until one day, one of my coworkers called a phone number I had found for a worker at the Department of Transportation, and the person on the call described the exact steps for retrieving the data. In passing, they mentioned, “I am opening Internet Explorer”... and that was when we all realized what we had been doing wrong. We never thought of using a different browser. We had all been using Google Chrome the entire time, and as obvious as it seemed afterwards, it never occurred to any of us that this could have been the problem. The unanswered emails and workers going out to lunch and never returning led us to believe that the website really was ‘trash’, but we were wrong. And though we felt silly for never thinking about trying this, we were more relieved that we had finally solved the problem. Now, I didn’t have to mention this somewhat dumb mistake I made, but I really do believe it sums up a lot of what I learned from the job.
As mentioned earlier, I realized that asking for help is always a good thing, and people will not mind it, as long as you are trying your best and learning from your mistakes. I also learned about troubleshooting and how sometimes what seems like the most complicated problems actually have simple solutions. If we had taken a second to breathe and walked through the process of what we were doing, just as the person on the call had done, we could have figured out the solution on our own. Finally, I learned that it is okay to struggle, because I would have never had these realizations if I never had this problem. Instead of being clueless of how to go about a situation like this in the future, I will know how to analyze the problem. I realized that this is what happens in the real world, and when I thought about it more, I realized that subconsciously, I am already experiencing situations like this in my everyday life. When I am studying for a math test in school and I don’t understand how to answer a problem, I take it step by step to figure it out and I take breaks to rest my mind (which is often where I have realizations about how to approach it). Similarly, when I am at my dance team practice and I am having trouble remembering choreography, I watch others and pay close attention to their movements, while also asking for help when I need it. It is not one but many skills needed to overcome obstacles in everyday lives, and while no one is exactly the same, many have very similar approaches. So, I advise you to get experience in a field you are passionate about. Even if it seems intimidating, or scary, or impossible. It really did ignite my passion for data analytics, and it inspired me to continue learning about data and computer science, along with Smart Cities and engineering in general. 
You never know what you may be interested in unless you try it, and even if you realize nothing more than that the career is not for you, you will have taken away many good experiences and learned many lessons from what you did.

Engineers have the goal of creating a soft robot that could be used in delicate tasks, a lot of which involve direct interaction with humans. These robots are made of a material that is strong enough to function, but still soft enough to be safe and to complete their tasks carefully. Some engineers found that using self fixing technology would be extremely beneficial for soft robots because they are more likely to break than other robots, considering they are made of a more delicate material. By using self fixing material, they could be more independent, and if they were damaged in the middle of a task, they wouldn’t need human intervention to fix them and continue. Here is a link to my talk on Self Healing Robots: https://www.youtube.com/watch?v=hLMRvjoH_lc . In the video, I describe the importance of the technology, how Self Healing material works, and possible uses. I've further elaborated on the content below. In general, the material is made of an elastic polymer, also known as an elastomer, embedded with tiny liquid metal droplets. When it is heated, the material expands, due to the droplets rupturing and forming electrically conductive pathways. This closes the areas that need sealing. As it cools, the polymers form a covalent bond and close the gap. Currently, the most significant problem with the idea is the fact that it takes a long time to heat the entire material. However, self healing technology could eventually lead to breakthroughs in many different fields. Currently, different forms of the material itself, such as self healing rubber, are being used to make a variety of innovations, including tires that are immune to permanent punctures.
Engineers at the University of Cambridge are also working on a self-healing innovation: soft robotic hands made from jelly-like plastic, which may be the solution to human needs. They are able to sense damage and patch themselves up without human intervention. While they are able to create new bonds within 40 minutes, the next step is to embed sensor fibers in the polymer to detect where damage is located. In the future, the material could be used to make more capable robots with different functions. For example, prosthetic hands would greatly benefit from the ability to fix themselves. A prosthetic hand needs functionality similar to a human hand's, and if it were cut while holding something sharp, it could heal itself and return to its normal state. This technology would also be a great addition to climbing robots (a type of robot being worked on at Harvard) that can save people from fires. Such a robot could quickly restore the polymer bonds that were damaged and continue the rescue mission. What is even more interesting is that the material can be used for “edible robots,” which are biodegradable robots that can safely deliver medicine to different parts of the body. Once the robot enters the body, humans can no longer help it, so it needs to be independent and able to complete the task on its own. The self-fixing technology would make it possible for it to overcome its malfunctions and continue on. I am excited to see where this technology is used next.

The Self Stimulator Robotic Arm is an example of a robot that can learn to adapt to changes in its physical composition. The robot, which is being worked on at Columbia University, actually knows when and where damage occurred and can learn to adapt to changes based on the data it collects. The robotic arm uses deep learning to create a self model, which is a model of its own mechanics built without any prior knowledge about physics, geometry, or motor dynamics.
At first, it moves randomly and collects trajectories, or data on its path of motion, with each path consisting of 100 points. In other words, it makes a 3D graph with many lines of motion and interprets it to understand how its joints are connected. Because the robot can understand how it functions without having prior knowledge, it can learn to function with missing parts. Think about when you breathe. You don't have to be taught, and when you become congested, you adapt by learning to breathe through your mouth more instead of your nose. This is similar to how the robotic arm doesn't need any "How To"s on functioning properly, and how it can adapt without being taught or needing assistance. Columbia engineers tested their robot by giving it a "picking and placing" task. Within 34 hours of training, the Self Model Robot showed a 44 percent success rate and was consistent within 4 centimeters when picking up the balls. They also tested the robot's ability to detect changes in its morphology, such as damage. The engineers replaced a perfectly functioning part with a slightly deformed part, and the robot automatically detected the change, remade its self model, and resumed its task. The robot's human-like qualities and abilities make it close to a form of AI, and it relies on analyzing data to be able to function. The innovation proves how mechanisms, programming, and data analysis can come together to create such an advanced product, which may possess features that all robots will have in the future. Watch the robot in action here. If the topic of robots adapting to their own malfunctions interests you, check out my article about the Particle Robot. This robot doesn't use data to understand how to function like the Self Aware Arm does, but it can continue functioning even when parts break.

I want you to think about how people function after breaking their leg. Although they are not able to move as quickly, they are still able to walk around by using crutches and their other foot to assist them. This is the idea that inspired engineers to create robots which can adapt to their own broken parts. Recently, I've been fascinated with robots that can continue their tasks even after malfunctions. The Particle Robot being worked on at Columbia University, MIT, Cornell University, and Harvard University is a great example of how this is possible. Each unit consists of a

On its own, each particle is only capable of simple back-and-forth movements; to actually travel, the particles must be connected. The robot is made of many small parts that depend on each other to function, and when one part malfunctions, the rest can continue. The particles are loosely coupled and connected by magnets on very small tentacles attached along their perimeters. If the magnets were placed directly on the particles, the connection would be too rigid and the robot would not be able to move. Because the particles are connected by the tentacles, they can stay connected even while the robot is moving. When all of the particles expand and contract in the right sequence, they can push and pull the whole group toward a light source, which the robot can sense. Each particle broadcasts a signal that shares its perceived light intensity level with all other particles. For example, suppose the particles sense light intensity on a scale of 1 to 10. Particles closest to the light register level 10, and those farthest from the light register level 1. Particles experiencing the highest intensity (level 10) will expand first, and as they contract, level 9 expands, and so on. This mechanical expansion and contraction wave, a coordinated pushing and dragging motion, moves the cluster toward or away from the stimulus. The most interesting thing about these robots is that there are so many little parts working together that when one particle stops working, the rest can keep the robot functioning. This is because the independent back-and-forth movements of the working particles will continue to push each other even when some particles are not functioning. In other words, the broken part can depend on the rest of the working bots. Currently, locomotion is maintained even when 20 percent of the particles malfunction. The next step for the engineers is to miniaturize the components and make the robot out of millions of microscopic particles.
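To make the expansion wave easier to picture, here is a minimal Python sketch of the idea. It is only an illustration under my own assumptions, not the engineers' actual control code: the function names `light_levels` and `expansion_order`, the 2D coordinates, and the use of straight-line distance as a stand-in for a real light sensor are all hypothetical.

```python
import math


def light_levels(positions, light, levels=10):
    """Give each particle a level from 1 (farthest from the light) to `levels` (closest).

    Assumption: distance to the light stands in for a real light-sensor reading.
    """
    distances = [math.dist(p, light) for p in positions]
    nearest, farthest = min(distances), max(distances)
    span = farthest - nearest
    result = []
    for d in distances:
        if span == 0:
            # Every particle is equally far away, so all share the top level.
            result.append(levels)
        else:
            closeness = (farthest - d) / span  # 1.0 = closest, 0.0 = farthest
            result.append(1 + round(closeness * (levels - 1)))
    return result


def expansion_order(positions, light, levels=10):
    """List the groups of particle indices in the order they expand.

    The highest level expands first, then the next level down, and so on.
    Levels that no particle registers are skipped.
    """
    assigned = light_levels(positions, light, levels)
    steps = []
    for level in range(levels, 0, -1):
        group = [i for i, lv in enumerate(assigned) if lv == level]
        if group:
            steps.append(group)
    return steps


# Four particles in a row, with the light off to the right (made-up coordinates).
particles = [(0, 0), (1, 0), (2, 0), (3, 0)]
light_source = (10, 0)

print(light_levels(particles, light_source))
print(expansion_order(particles, light_source))
```

In this made-up example, the particle nearest the light (index 3) expands first and the farthest one (index 0) expands last, which is the wave described above. A broken particle could be modeled by simply leaving it out of the groups, and the rest of the order would stay the same.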
The main problem with miniaturizing the robot is dealing with cells that are about the size of grains of sand. Watch the particles in action here!

Singapore is one of the most technologically advanced countries in the world, and it is only becoming smarter. It spent a total of $1 billion on its smart city initiative in 2019. Here are a few of the most interesting innovations Singapore is currently developing and using.

TRANSPORTATION

The main innovation is Vehicle to Everything, or V2X for short. It includes two parts: vehicle-to-vehicle (V2V) and vehicle-to-infrastructure (V2I).

Vehicle-to-Vehicle (V2V)

Other smart technology used in Singapore includes: Smart Cameras

ELECTRONIC TRANSACTIONS

Electronic transactions have been continuously advancing in Singapore.

1. Paylah allows users to make payments via QR code. More than one million people use it, and it registers more than 50,000 transactions per day.
2. Paynow allows peer-to-peer transfers of money using the recipient's mobile number, in addition to the ability to pay businesses, corporations, and government agencies.
3. GrabPay is an app that acts as a mobile wallet.

In addition, the Smart Senior Program provides a card that lets seniors pay at stores, at restaurants, and for bus transportation, with cash rebates for every 100,000 steps.

Author: Katie Zelvin

September 2020
